What remains (026)

Exploring many kinds of human-machine augmentation.

I don’t know about you, but I spent my weekend developing an interactive web app from scratch. It’s worth mentioning that I don’t code. I’ve tried learning assorted languages over the years, but something about writing code never clicked, so in practice I’ve always been left negotiating with developers rather than just building what I want. All of this changed after lunch on Saturday, when I decided to implement something I’ve always wanted to build – an AI-based Tarot reading app – as an actual, working webpage.

Building with ChatGPT is nothing short of mind-blowing. Transformative doesn’t really begin to describe what it’s like to have a limitless assistant helping you do anything.

You have to be mindful of the size of chunks you are asking GPT to build. I made the mistake of starting with an ambitious request, and quickly realized the confident-but-wrong approach ChatGPT is known for also applies to code.

After a while you start getting the hang of what is worth asking, and how to build complexity in modules. Chunking your requests becomes second nature and you quickly realize ChatGPT can be used to answer almost any question. Something isn’t working? Short on ideas for what to do next? Copywriting? Images? Troubleshooting? ChatGPT has your back.

The app is fully functional but isn’t quite ready to share, and it’s hard to convey how profound this shift feels to me. You no longer need anyone to help you do any of these things. To me that meant spending the weekend intensely coding and making something work.

I have no idea how you will spend your time differently moving forward, but I strongly advise investing $20 in ChatGPT Plus and as much attention as possible in wrangling these tools to start understanding what remains.

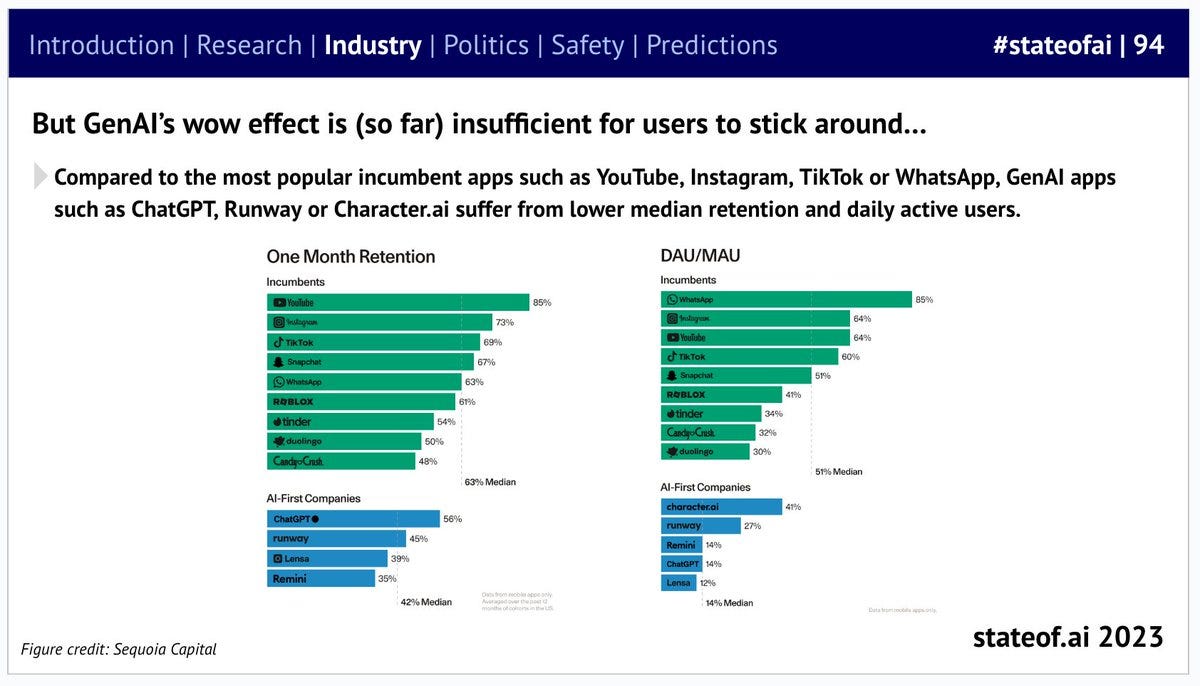

State of AI 2023 Report

Highly recommended Twitter thread and PDF download.

Molding Morality

Dario Amodei, CEO of Anthropic, and Azeem Azhar explore the frontier of crafting large language models embodying human values. The discourse traverses the terrain from the nurtured development of AI to envisaging a future with universally adopted, ethically-aligned AI systems.

Insightful Contrast: The juxtaposition of deterministic software with complex, safety-critical AI, evoking a shift from engineering to nurturing AI.

Ethical Framework: Anthropic's proactive stance towards embedding ethics, reflecting a broader industry contemplation on AI morality.

Future Vision: Anticipation of ubiquitous, trustworthy AI, underscoring the exigency of ethical considerations in AI's evolutionary trajectory.

Ethics at the Helm

DeepMind's Shane Legg deliberates on the journey towards AGI, underscoring the imperative of ethical alignment in powerful machine learning systems. He acknowledges existing architectural deficiencies, particularly in handling episodic memory and misinformation, and contemplates the prospect of realizing AGI by 2028.

Ethical Grounding: Emphasizes early integration of ethical values in AGI development.

Architectural Gaps: Identifies episodic memory handling as a key architectural shortfall.

2028 Horizon: Explores the plausibility of achieving AGI by 2028, promoting a cautious optimism.

Conscious Leaps: The AGI Gridlock

Joscha Bach of Google DeepMind and Roy Bahalargham from Huawei dissect the present AI landscape, distinguishing between task-oriented AI and AGI. They delve into conscious AI, exploring its potential to unlock AGI, and the critical role of language models therein. The discourse also touches on the regulatory, ethical, and existential facets of AGI, spotlighting the peril of the "black box" approach and stressing the imperative of transparency and comprehension in AGI systems.

Enlightening Divergence: The discussion unveils the nuanced differences between task-specific AI and the broader, more complex domain of AGI.

Conscious Catalyst: Conscious AI emerges as a potential harbinger for advancing towards AGI, highlighting the interplay between consciousness and artificial intelligence.

Regulatory Rigor: The dialog underscores the exigency of regulatory frameworks to navigate the ethical and existential quandaries posed by AGI.

Emerging Vocabulary

Generalization

Refers to an algorithm's ability to apply learned information from specific training instances to new, unseen examples. It's a fundamental concept in machine learning where the primary goal of any model is to achieve high predictive performance on new, unseen datasets, and not just on the data it has been trained on. Good generalization means that the model has really learned the underlying patterns in the training data and can accurately predict for unseen data, rather than just memorizing specific instances.
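To make the contrast between learning a pattern and memorizing instances concrete, here is a minimal sketch in plain Python. Everything in it is an assumption for illustration: the rule y = 2x + 1, the noise level, the dataset sizes, and the helper names (`make_data`, `fit_line`, `make_memorizer`, `mse`) are all hypothetical. One model fits a straight line to the training data, the other simply looks up training points it has already seen.

```python
import random


def make_data(n, rng):
    """Sample n points from the assumed rule y = 2x + 1 plus small noise."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    return [(x, 2 * x + 1 + rng.gauss(0, 0.5)) for x in xs]


def fit_line(data):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in data)
    var = sum((x - mean_x) ** 2 for x, _ in data)
    slope = cov / var
    return slope, mean_y - slope * mean_x


def make_memorizer(data):
    """Recall training points exactly; answer unseen inputs with the mean training y."""
    table = dict(data)
    fallback = sum(table.values()) / len(table)
    return lambda x: table.get(x, fallback)


def mse(predict, data):
    """Mean squared error of a prediction function over (x, y) pairs."""
    return sum((predict(x) - y) ** 2 for x, y in data) / len(data)


rng = random.Random(0)       # fixed seed so the run is repeatable
train = make_data(50, rng)   # data the models get to see
test = make_data(50, rng)    # new, unseen data from the same rule

slope, intercept = fit_line(train)
line = lambda x: slope * x + intercept
memorizer = make_memorizer(train)

results = {
    "line_train": mse(line, train),
    "line_test": mse(line, test),
    "memorizer_train": mse(memorizer, train),
    "memorizer_test": mse(memorizer, test),
}

for name, value in results.items():
    print(f"{name}: {value:.3f}")
```

The memorizer scores perfectly on the data it was built from and falls apart on the unseen set, while the fitted line performs about equally well on both. That gap between training and test error is what poor generalization looks like in practice.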

Artificial Insights is written by Michell Zappa, CEO and founder of Envisioning, a technology research institute.