Still Having a Moment (139)

Captured Voice, Reflection Loops and Trust but Verify.

This week marks three years writing Artificial Insights. In early 2023 I kept answering the same questions from friends and collaborators about what was happening in AI, and decided it was time to start writing about my impressions and expectations of a field I’ve never been more than an observer of.

Part of me felt late to the party – that everything worth saying had already been covered by more competent people, so why bother. I’m glad I committed to writing weekly about what I thought was the transition toward AGI. Forcing myself to click Publish every week means I have to put words to the vague impressions cast by the countless tweets, videos, and links crossing my feeds. Saying AI moves fast is an understatement, and there’s never a shortage of hot takes to pass along – but as promised last week, I’m trying to share more personal takes on how AI is actually shaping my own work.

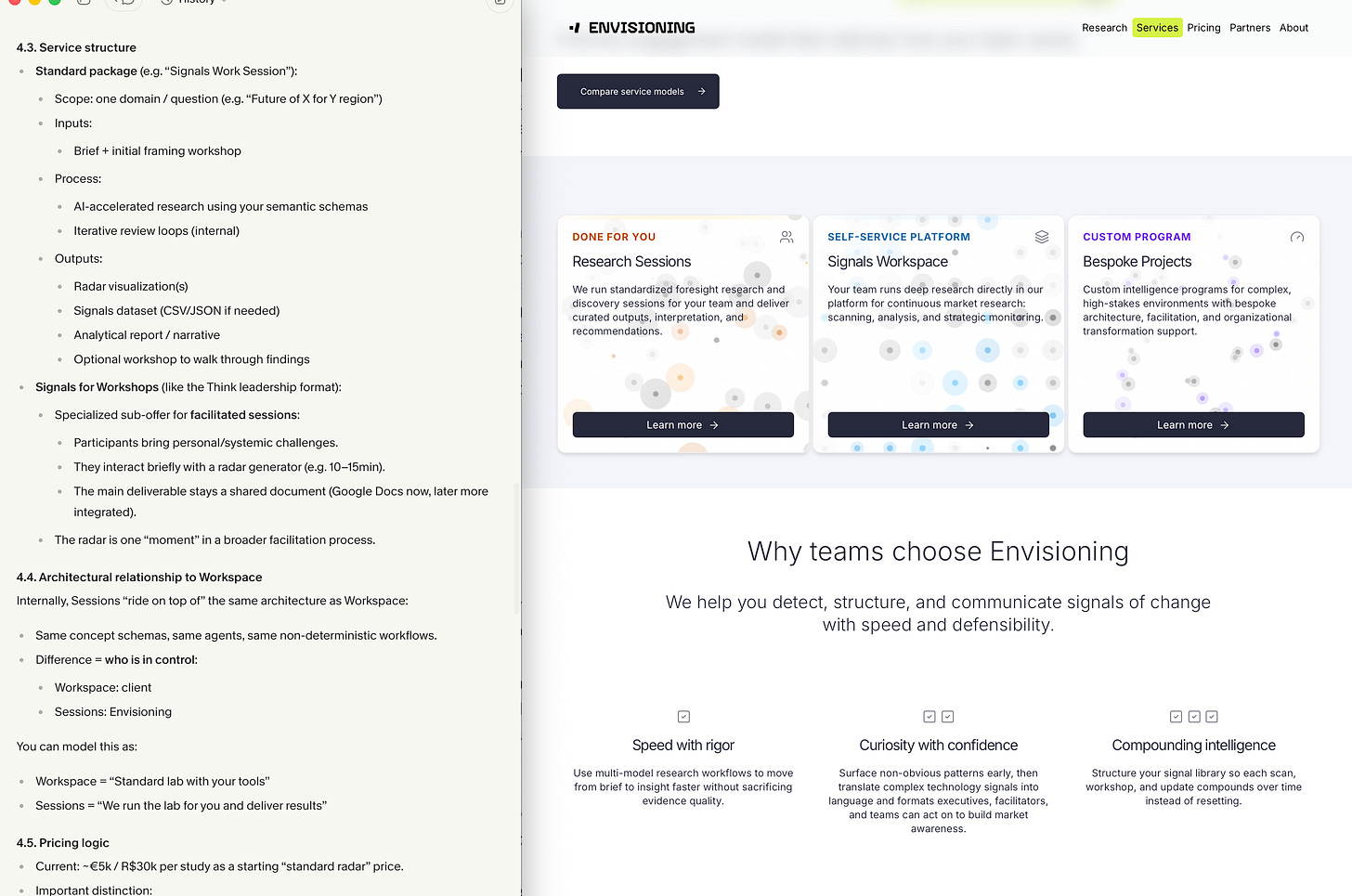

Captured Voice

Presenting our work is ongoing work. Envisioning has been around for fifteen years, but how we describe what we do has to keep evolving alongside the field, the clients, and our own understanding of the work. Writing copy from scratch tends to produce something that sounds like everyone else’s website. Last month I tried something different: I took two years of Granola transcripts – sales calls, client questions, internal development meetings, my own pitches – and fed them to a reasoning model alongside our existing site code. The prompt wasn’t “write better copy.” It was closer to “compare how we actually talk about ourselves to how the website does, and propose a data architecture and messaging that matches the former.” What came back was a revised structure for the services pages grounded in language we’d already been using, just never written down. The non-obvious part isn’t that AI can rewrite copy. It’s that the most honest source of your own voice is probably the recordings of you talking to clients, not the marketing pages you sat down to write.

Reflection Loops

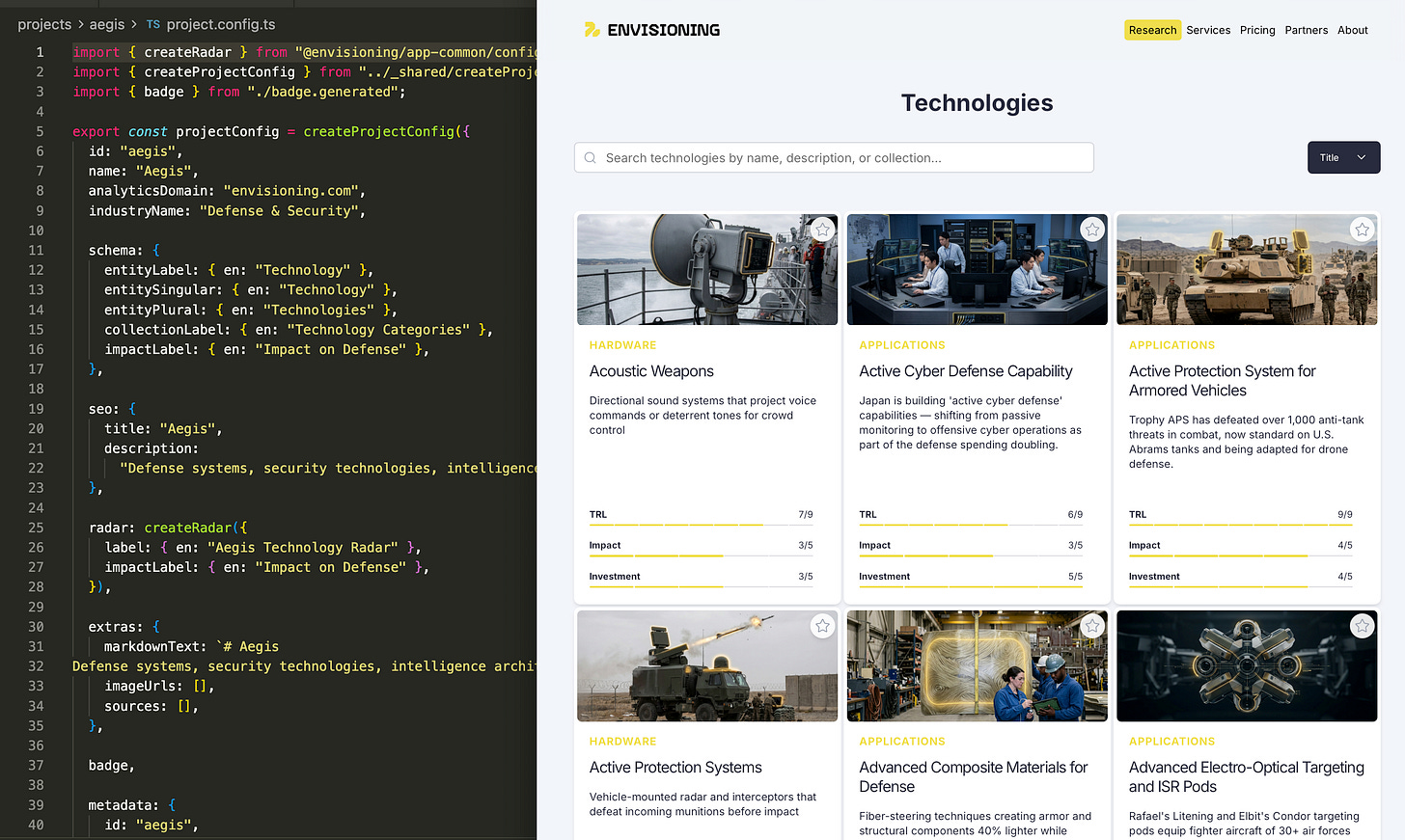

Our research hubs are produced by leaning on multiple frontier models, never just one. Sometimes in parallel – same question to three or four models, then correlate the answers. Sometimes in sequence – model B evaluates model A’s output, model C evaluates A+B, and so on until the scope is exhausted. The benefit tapers around three or four models, but it’s always meaningfully better than relying on a single one. The tools matter less than the method: I’ll do this in Cursor when I want to toggle between models manually, and we’ve productized the workflow in Signals, which orchestrates models in parallel against a shared question. Our role shifts from generator to overseer – applying methodology, defining scope, judging consensus. Hallucinations drop dramatically when models cross-check each other, because the same fabrication rarely survives three independent passes. Coverage improves, reliability improves, and the work feels less like prompting and more like running a small panel of analysts who happen to never sleep.

Trust but Verify

Both habits share a spine and a posture. I've started asking models a lot more questions about their own process before letting them act. Before any non-trivial task, I'll ask the model to repeat back what it's about to do, in detail. Sometimes that's a numbered plan, sometimes it's a summary of its understanding of the request, sometimes it's the model identifying what it thinks the key constraints are. The point is the reflection. AI is fluent in a way that makes verification feel rude; the prose is clean, the structure is reasonable, the confidence is high. But fluency isn't accuracy, and the gap between them is where the embarrassing mistakes live. Having the model surface its understanding before it acts is a cheap way to catch misunderstandings before they balloon into output you have to throw away. It feels excessive until the day it saves you, and then it feels like the bare minimum.

Until next week,

MZ

World Beautiful Business Forum (Athens)

I’m in Athens next week for HoBB. Ping me if you’re around and want to meet.

No Human Middleware (10 min)

YC Partner Diana Hu argues the org chart itself is the bottleneck: every management layer that routes information is a speed tax. The model is the intelligence layer now, and companies that keep human middleware will lose to those running closed-loop, queryable organizations.

Some companies have already pushed this to the point where their repos contain no handwritten code, just specs and test harnesses.

Alignment Is the New Bottleneck (18 min)

GitHub Labs staff researcher Maggie Appleton on why solo-agent tooling is making team software worse, not better: the time between filing an issue and an agent opening a PR is now minutes, which collapses every alignment checkpoint onto the pull request, where it was never designed to land.

Believing individual productivity leads to great software is nine women make a baby in one month logic.

Keep the Agent in the Smart Zone (97 min)

Matt Pocock, TypeScript educator turned AI workflow teacher, on why context window bloat is the root cause of agent failure: past roughly 100k tokens, quality degrades regardless of whether your window is 200k or 1M. The fix is task sizing, not model scaling.

Every time you add a token to an LLM, it’s kind of like you’re adding a team to a football league. The number of matches scales quadratically.

Benchmarks Lie, Nonsense Reveals (20 min)

Arena.ai’s Peter Gostev on BullshitBench, his 155-question eval of nonsense prompts: GPT and Gemini models accept the premise and answer roughly 50% of the time, Anthropic’s latest models push back most consistently, and extended reasoning makes the problem worse, not better.

GPT-o3 would maybe have one line where it would question the premise, then spend 20 paragraphs trying to solve it anyway.

If Artificial Insights makes sense to you, please help us out by:

📧 Subscribing to the weekly newsletter on Substack.

💬 Joining our WhatsApp group.

📥 Following the weekly newsletter on LinkedIn.

🦄 Sharing the newsletter on your socials.

Artificial Insights is written by Michell Zappa, CEO and founder of Envisioning, a technology research institute.