Babysitting the Agents (133)

They work while we watch.

Welcome to another edition of Artificial Insights, your autonomous intelligence digest. The AI news cycle shows no signs of abating, so here are some of the links that caught my attention over the past week.

A Wall Street Journal reporter noticed something new in San Francisco’s Dolores Park: people sitting next to open laptops, staring at the screen without typing. They were babysitting their agents. Close the lid and the work stops - so they sit in the sun, half-present, watching code write itself.

This image captures the week perfectly. We are in a transitional period where AI does more and more of the work, but still requires human proximity. Not supervision exactly, not collaboration either. Something new that we don’t have a word for yet.

Consider the range of stories this week. McKinsey’s internal AI platform Lilli was breached by a team that needed nothing more than a domain name and curiosity - 93,000 PowerPoint decks exposed, and an insider confirmed the platform is no longer in use. Meanwhile, Meta acquired Moltbook, the AI agent social network that went viral because of fake posts. The irony writes itself: Facebook was already well on its way to becoming Moltbook organically.

On the building side, vibe coding made the New York Times Magazine - not as a curiosity piece, but as a feature. The practice of natural-language programming has crossed from tech-Twitter into mainstream consciousness. And Microsoft integrated Claude Cowork into 365 Copilot, openly partnering with Anthropic - a move that suggests the model layer is becoming genuinely interchangeable.

Elsewhere, MiroFish lets you simulate 76 synthetic stakeholders arguing about your brand’s future for five virtual years - for about $15 in API costs. A 20-year-old student built it in 10 days via vibe coding; Shanda’s founder committed $30M within 24 hours of seeing the demo. One user ran a full strategic scenario for a Ukrainian fitness chain under wartime conditions - the kind of analysis that would cost $500K+ from a consultancy.

Then there is the story that perplexed our community. A man named Paul Conyngham claims to have used Claude to design a personalized cancer vaccine for his dog, got it synthesized, and the dog’s tumors are shrinking. Greg Brockman called it incredible. Skeptics called it survivorship bias. The truth is probably somewhere in between, but the mere plausibility of the claim - that a non-expert with an LLM and a sequencing service could produce a functional therapeutic - tells you something about where we are. The barrier was never intelligence. It was access.

Yann LeCun seems to agree that the current path has limits. He announced a €1B seed round for AMI Labs, betting on world models and JEPA rather than LLMs - a billion-plus valuation for an approach that explicitly rejects the transformer orthodoxy. Whether he is right or early, it is a significant signal that the field is diverging. Karpathy, meanwhile, released AutoResearch: a 630-line Python script that runs autonomous ML experiments overnight - proposing changes, running experiments, evaluating results, and committing via Git. That works out to 50 experiments on one GPU while you sleep. The goal, in his words: “engineer your agents to make the fastest research progress indefinitely and without any of your own involvement.”
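For readers who want a feel for that propose, run, evaluate, commit loop, here is a minimal sketch. It is not Karpathy's code. Every name in it (`run_experiment`, `propose_change`, `research_loop`) is hypothetical, the "experiment" is a toy loss function rather than a training run, the proposals are random tweaks rather than agent-written code changes, and the "commit" is an in-memory list standing in for Git.

```python
import random


def run_experiment(config):
    """Stand-in for a training run: returns a toy 'validation loss' (lower is better).

    Assumption: the minimum sits at lr=0.1, width=64. A real run would train a model.
    """
    return (config["lr"] - 0.1) ** 2 + ((config["width"] - 64) / 64) ** 2


def propose_change(config, rng):
    """Propose one small change to the current best config (hypothetical policy)."""
    candidate = dict(config)
    key = rng.choice(sorted(candidate))
    if key == "lr":
        candidate["lr"] = max(1e-4, candidate["lr"] * rng.choice([0.5, 2.0]))
    else:
        candidate["width"] = max(8, candidate["width"] + rng.choice([-16, 16]))
    return candidate


def research_loop(initial, n_experiments=50, seed=0):
    """Propose, run, evaluate, and keep only the changes that improve the loss."""
    rng = random.Random(seed)
    best, best_loss = dict(initial), run_experiment(initial)
    history = [(dict(best), best_loss)]  # stand-in for a Git commit log
    for _ in range(n_experiments):
        candidate = propose_change(best, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # evaluate: keep strict improvements only
            best, best_loss = candidate, loss
            history.append((dict(best), best_loss))  # "commit" the improvement
    return best, best_loss, history


if __name__ == "__main__":
    best, loss, history = research_loop({"lr": 0.8, "width": 128})
    print(f"best config: {best}, loss: {loss:.4f}, commits: {len(history)}")
```

The design choice worth noticing is the strict "keep only if better" rule: it is what lets a loop like this run unattended, because a bad proposal is simply discarded rather than accumulated.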

Then there is the question of what this does to the human on the other end. Designer Matt Jones wrote a beautiful piece about working with agents, an experience he calls “Gas Town and Bullet Hell” - the feeling of managing parallel AI processes that each need attention at unpredictable intervals. Wall clock time stops meaning what it used to. A new BCG/HBR study found that workers supervising multiple AI agents report 33% more decision fatigue and a distinctive mental fog researchers are calling “brain fry.” Steve Yegge, who built Gas Town (an orchestrator for 20+ parallel coding agents), described the same thing as “nap attacks.” The connection to bullet hell arcade games is apt: the information overload is the same, but in danmaku it produces flow states.

You are not doing one thing anymore. You are tending a garden of processes. Maybe that is what the people in Dolores Park are doing. Not babysitting. Gardening.

MZ

Envisioning researches emerging technology by combining multiple AI models to produce higher-quality analysis than any single model can deliver. Curious how it works? Join us for a demo of our Signals platform on March 31.

We are also excited to be working with Brasil Futures Fórum, which takes place on August 23-24 in Rio de Janeiro. We’ll have a lot more to share about this collaboration in the coming months, but if you are considering visiting Rio, here is your chance.

The biggest bottleneck to scaling compute (2h30)

SemiAnalysis CEO breaks down the $600B capex wall: 20 gigawatts deploying in the US this year, but most of the big tech spending is deposits on 2027-29 capacity. The real bottleneck is power and physical infrastructure.

Inside a16z’s Top 100 AI Apps Report (38 min)

Olivia Moore walks through the latest data. ChatGPT is 30x larger than Claude on web, but usage is diversifying fast. Claude is winning prosumer, Gemini correlates with image model releases, and only 10% of the global population uses ChatGPT weekly.

The Future of Brain-Computer Interfaces (53 min)

Max Hodak (Neuralink co-founder, now Science) on the first retinal implant giving blind patients sight — 40+ people treated, NEJM-published, approval pending this year.

Google Labs Tools Update (31 min)

Richard Wolfe flagged this walkthrough of the current state of Google Labs tools.

Twitter Signals

A WASM interpreter hard-coded into transformer weights — a computer literally running inside an LLM, executing deterministic code at inference time. @joemccann

Patrick Collison’s nuanced take on the viral dog cancer mRNA story: the tech is promising, but “the emergent system of regulators and manufacturers is indeed far too conservative.” @patrickc

“Knowledge is almost worth zero in the AI era. What matters now is connecting the dots and executing fast.” @shiri_shh

“At this rate everyone’s gonna have their own app and zero users.” The vibe coding reality check. @thesayannayak

“idk how to explain this but OpenClaw is for the same people who use Notion.” @tekbog

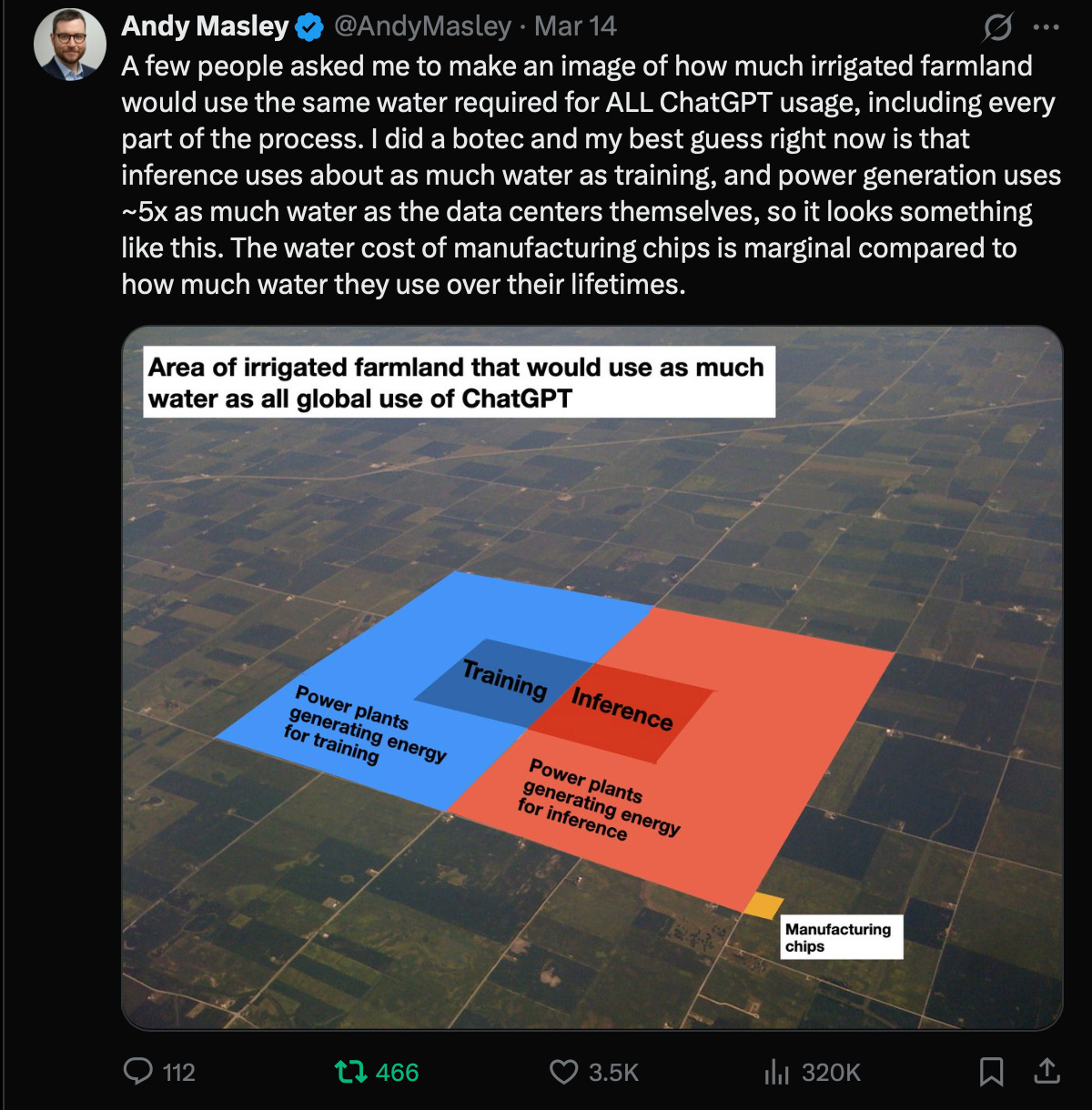

ChatGPT’s total water usage contextualized against irrigated farmland — the numbers are not what the panic suggests. @AndyMasley

AI capex is hitting a wall: implied spending of ~$10T/year by 2030 would consume 100% of hyperscaler free cash flow. @ramez

“Stop designing businesses for 2026 scarcity. Design for 2030 abundance.” @PeterDiamandis

Quick Links

Why the ATM didn’t kill the bank teller — Historical pattern for the AI-and-jobs conversation;

Agents of Chaos — Researchers studying AI agent failures built their website with… AI agents;

malus.sh — A satirical site-as-statement against AI-assisted clean room implementations;

pencil.dev — New AI design tool;

Quaise — Geothermal energy by vaporizing rock;

Amazon addresses AI-related outages — Internal meeting to address growing pains.

If Artificial Insights makes sense to you, please help us out by:

📧 Subscribing to the weekly newsletter on Substack.

💬 Joining our WhatsApp group.

📥 Following the weekly newsletter on LinkedIn.

🦄 Sharing the newsletter on your socials.

Artificial Insights is written by Michell Zappa, CEO and founder of Envisioning, a technology research institute.