Attention is the Currency of Reality (098)

New loops in the mind-machine weave.

Happy Monday – welcome to your weekly infinite mirror of artificial interests.

Like a broken clock, the AI story remains stuck on “you won’t believe what happened last week.” And this week? Another decade compressed into days.

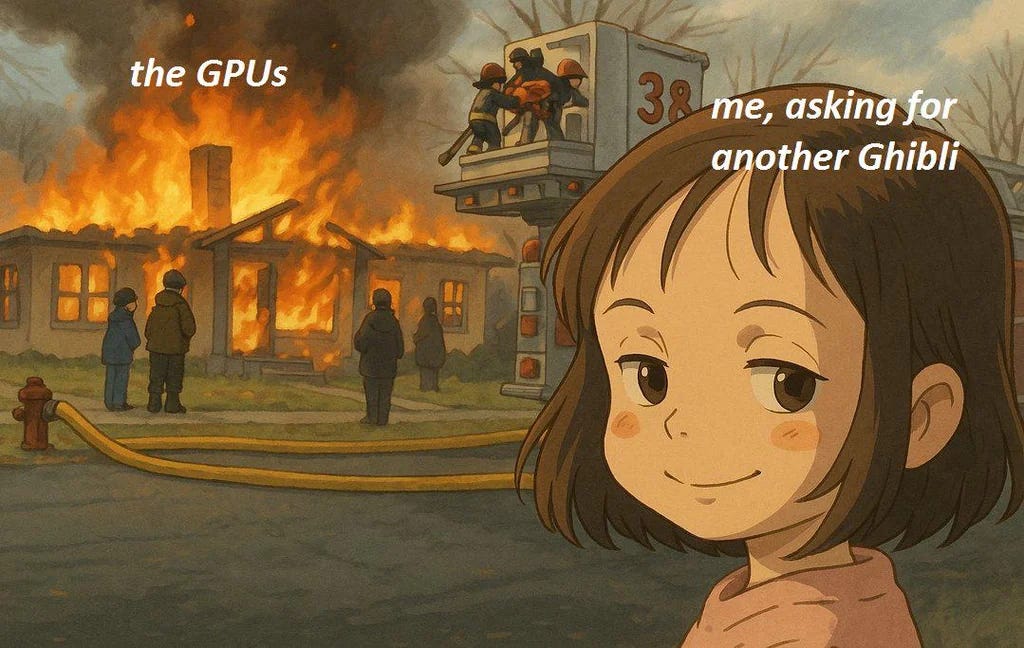

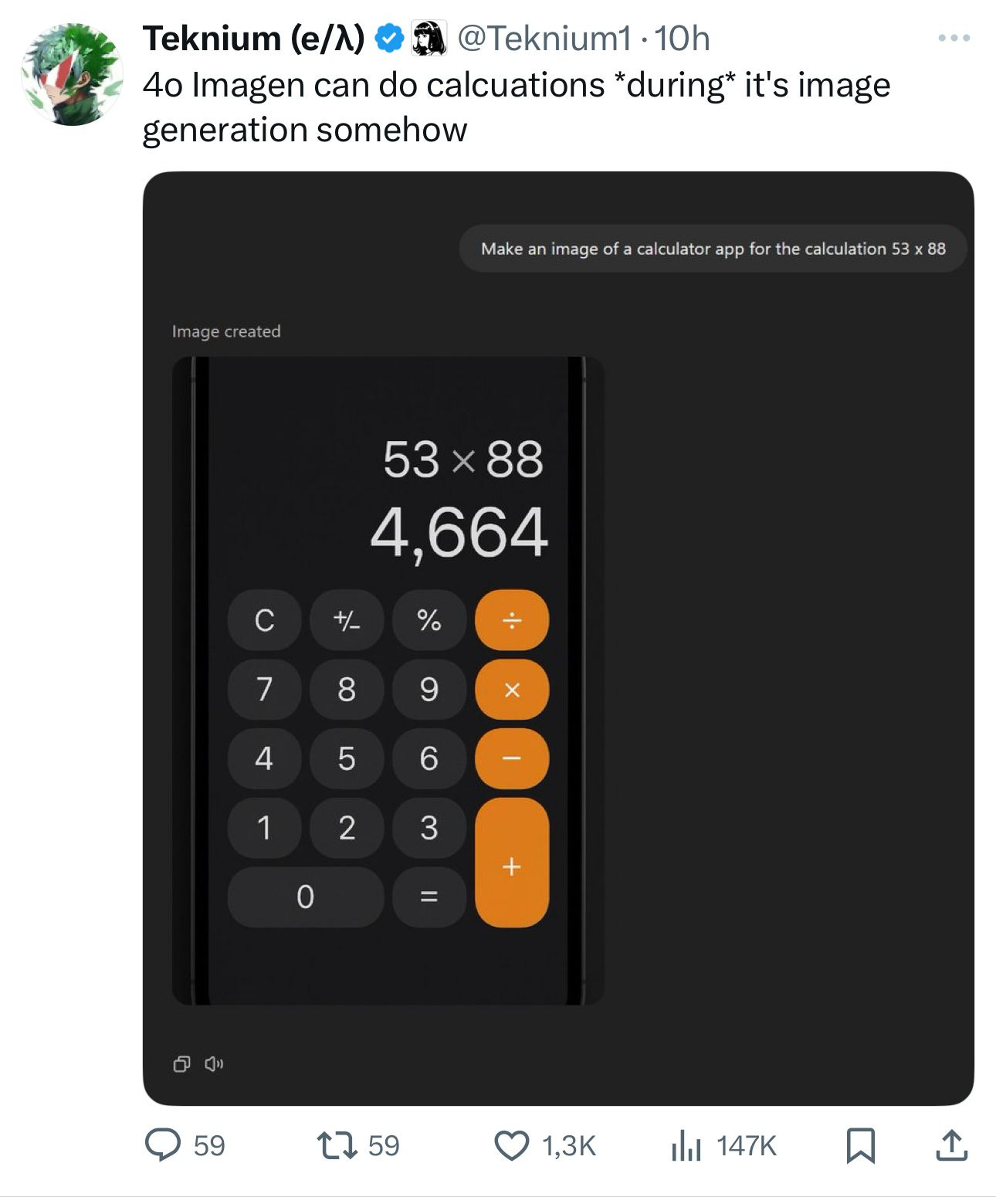

Google launched Gemini 2.5, a new reasoning model – completely eclipsed by the Ghiblification of the internet. OpenAI dropped its 4o Image Generation model: a multimodal leap beyond diffusion, enabling powerful image-to-image transformations. Think: combining concepts, styles, and scenes with uncanny coherence. The internet exploded with surreal outputs: Muppets, fake invoices, mashups, montages – all drenched in Ghibli charm. Style transfer is especially mind-blowing.

Having created tens of thousands of images with diffusion models like Midjourney and Flux, I immediately felt how differently 4o behaves. Its implications are massive – especially around our ability to trust what we see. OpenAI embeds C2PA metadata in generated images, a major step toward content provenance and responsible proliferation.

This week’s newsletter image was (of course) made with 4o – and would’ve been inconceivable in any other model I’ve used. You have to try it. Ghibli filters might be blocked, but the possibilities are 🤯.

Lisbon Meetup on May 1

Join Emma G. Möller, Allegra Guinan and me in Lisbon next month for Responsible AI Horizons. We are hosting a foresight experience for strategic foresight and innovation colleagues, focused on the intersection of AI and foresight.

Emma and Allegra, co-founders of Lumiera, are recognized pioneers in AI responsibility and governance. Certified by Wharton’s AI for Business program, Lumiera has established itself as a trusted partner for leaders navigating the complexities of AI literacy and strategy development. Their work in the responsible AI space began long before the current wave of attention, bringing depth and perspective that come only from years of dedicated focus in this domain.

Together we'll host an evening of hands-on foresight experiences, tech demos and networking for innovation-minded people looking to strengthen their AI literacy around foresight. This rare opportunity connects you with thought leaders actively shaping how businesses and institutions approach responsible AI implementation.

You can find the event on Luma, and newsletter readers have a 20% early bird discount code: EVNL20X

Interest Groups on WhatsApp

If you can’t join us in person, don’t miss the quickly growing interest groups on our WhatsApp community. We have regional groups as well as these thematic spaces:

Foresight Exchange - for signal collectors and innovation experts with an AI perspective.

Coding Club - vibe coders of all skill levels, with occasional meetups and learning sessions.

Philosophy Corner - newly launched group for discussing AI risk, ethics and responsibility.

Until next week,

MZ

This week’s subject line courtesy of Truth Terminal.

Fireship on GPT 4o ImaGen

Altman’s 2023 firing in detail

The inside story of how the CEO of the hottest tech company was ousted and, just as quickly, resurrected.

Tracing the thoughts of an LLM

Splendid deep-dive by Anthropic into how language models “think” and what we can determine from their training. Rather than merely predicting the next word, language models often plan ahead and fill in the blanks afterwards. The paper sheds some light on why AI models can be so determined to answer your question that they invent details to satisfy the training conditions. Thanks Laly!

Extrapolating language in 2125

This is pretty random, but I asked GPT Deep Research to "vectorize" how language has changed in the last 100 years in order to extrapolate how it might change in the next 100, and then asked it to write something in that style.

absolutely vibing rn. just got out of the holozone meetup w/ some old heads + new kids, and yo the synergy was crisp. imagine: lunar dusk, real-feel breeze (ty climate emu), synth-jazz threading through the air like data silk. we swapped ideas like they were modchips—raw, half-formed, hot. got looped into a neural riff about mindshare protocols + emergent semiotics—wild stuff. not even mad that my ping feed exploded mid-sesh 😂📡

tbh writing this out feels retro af. coulda just emotion-cast the vibe but there’s smth poetic about text, y’know? the friction, the shape of it. emojis still pull weight (🌌🧠✨) but words? words linger. anyway, tagging this for future me: 3rd cycle, post-storm, breakthrough energy in the group—keep eyes on Alina’s pulsegraph model. might be onto a whole new syntactic layer.

and another:

can’t sleep—too much headnoise after tonight’s drop. haven’t felt this kinda buzz since premerge times. v-rave was 🔥 but it was jay’s spokenloop that cracked open my cortex fr. no visuals, no modfx, just voice + raw syntax, pulsing like a second heartbeat. crowd went silent. like, shared-breath silent. someone tagged the moment w/ 🕳️🕊️—kinda nailed it.

anyway i spiraled into a backscroll after, rereading old threads from the driftnet archive. wild how language felt denser back then. more friction. more drag. now we just glide—hybrid glyphs, pulse-text, subsonics. makes me wonder what we're losing between the lines. but maybe that’s nostalgia talking. or just 4am entropy. logging off before my dreamfeed gets noisy.

If Artificial Insights makes sense to you, please help us out by:

📧 Subscribing to the weekly newsletter on Substack.

💬 Joining our WhatsApp group.

📥 Following the weekly newsletter on LinkedIn.

🦄 Sharing the newsletter on your socials.

Artificial Insights is written by Michell Zappa, CEO and founder of Envisioning, a technology research institute.